A record-breaking licensing transaction signals the dawn of the inference era and validates Groq’s meteoric rise

Nvidia’s $20 Billion Groq Deal: A Strategic Masterstroke in the Inference Era

As inference emerges as the defining battleground in AI’s global expansion, Nvidia has made a decisive move: striking a $20 billion licensing agreement with Groq, one of the fastest-growing and best-executing companies in our portfolio.

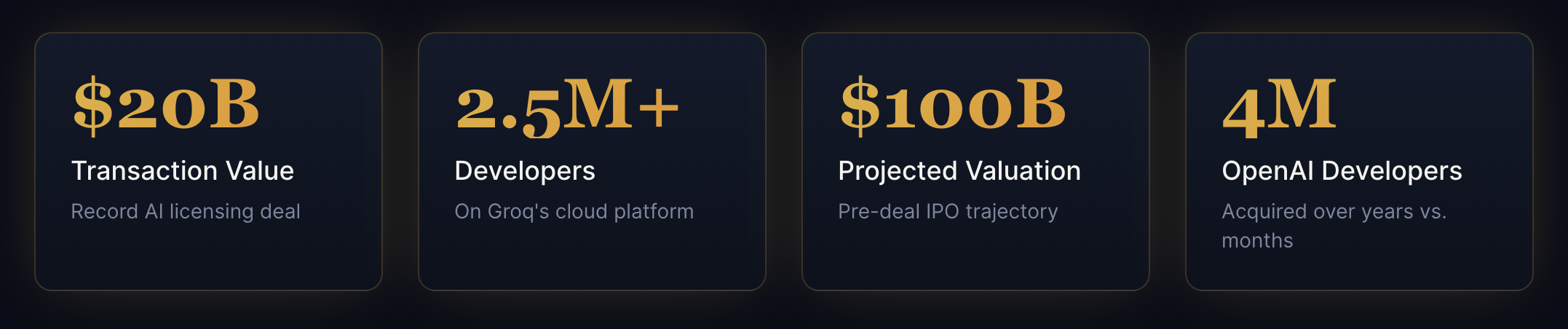

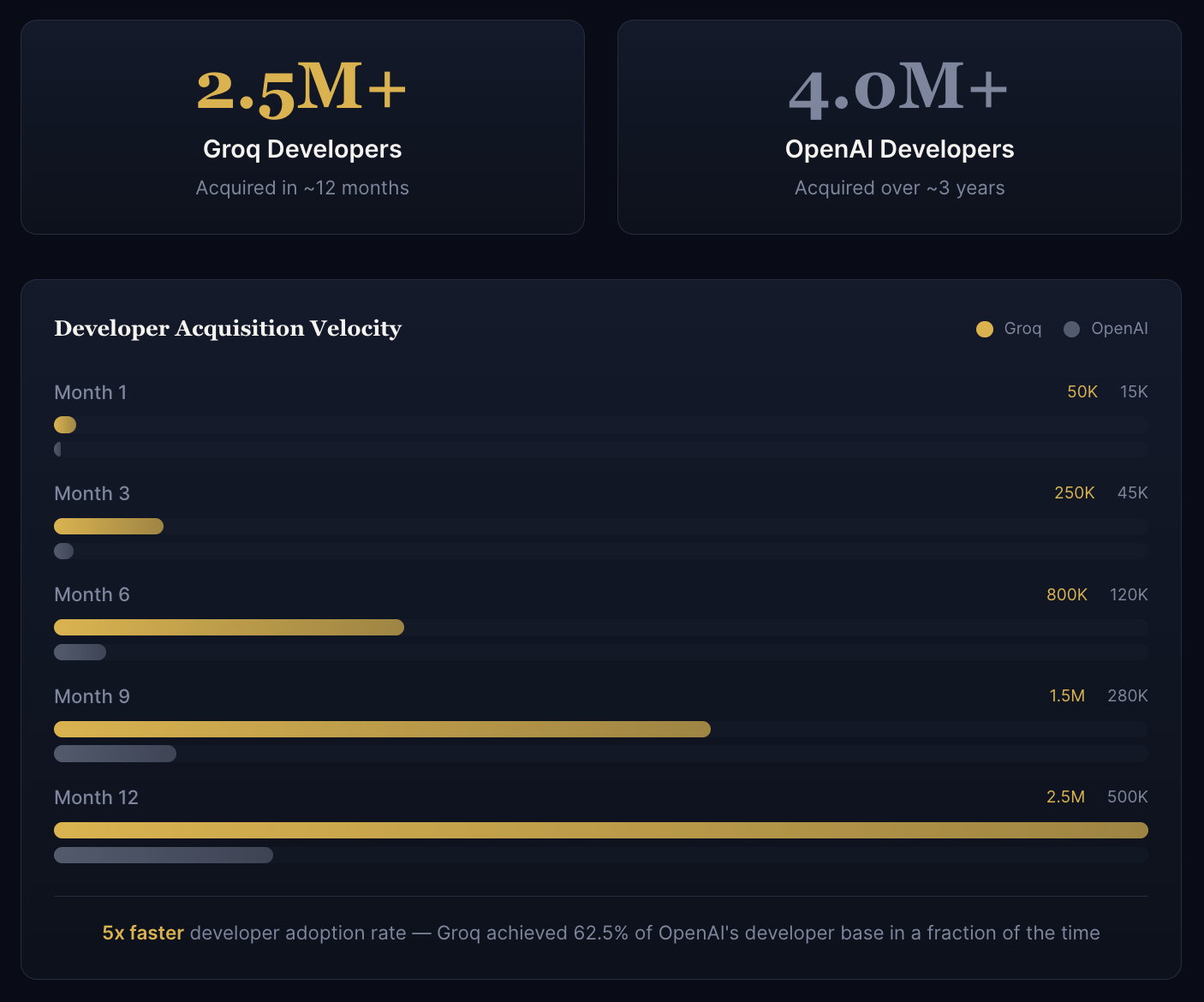

The numbers speak for themselves. Since launch, CEO Jonathan Ross and his team have been on a remarkable trajectory: over 2.5 million developers onboarded to their cloud, major global partnerships secured, and data centers deployed at a pace unmatched by any modern AI infrastructure company. For context, OpenAI took years to reach 4 million developers; Groq reached more than half that figure in months.

While we’re thrilled to witness this record-setting outcome for the AI industry, we’ll admit to some surprise. Based on Groq’s momentum, we had been anticipating an IPO path with a potential $100 billion valuation within the next 24 months.

The Deal Structure

Understanding the mechanics of a $20B licensing transaction

Nvidia’s $20 billion licensing transaction with Groq represents a strategic pivot in how the AI giant approaches the rapidly evolving inference market. Rather than a traditional acquisition, this licensing structure allows Nvidia to integrate Groq’s revolutionary LPU (Language Processing Unit) technology into its ecosystem while enabling Groq to maintain operational independence.

The deal structure is particularly notable for its focus on intellectual property licensing rather than equity acquisition. This approach provides Nvidia with immediate access to Groq’s inference acceleration technology—critical as AI deployment shifts from training-heavy workloads to inference-dominated production environments.

“As inference becomes the next biggest phase in global AI scale and adoption, Groq became a must-have to add an inference layer to the Nvidia ecosystem.”

Company & Employee Impact

What this means for Groq’s trajectory and team

CEO and founder Jonathan Ross has led Groq on an exceptional trajectory. The company’s execution has been remarkable: onboarding over 2.5 million developers to its cloud platform, closing major global partnerships, and building data centers at a pace that outstrips any modern AI infrastructure company.

For context, OpenAI accumulated 4 million developers over several years—Groq achieved more than half that figure in mere months. This velocity of adoption speaks to both the performance advantages of Groq’s LPU architecture and the team’s exceptional go-to-market execution.

Team Continuity

The licensing structure allows Groq’s team to continue operating independently, preserving the culture and velocity that made them attractive to Nvidia in the first place.

Operational Scale

With $20B in licensing capital, Groq can accelerate data center buildout and talent acquisition without the constraints of traditional venture funding cycles.

[Chart: Developer Adoption: Groq vs OpenAI]

The Investor Perspective

A record outcome with an unexpected path

Many believed Groq was on a clear path to IPO, with projections suggesting a potential $100 billion valuation within the next 24 months. The decision to pursue a licensing arrangement with Nvidia rather than the public markets signals either an extraordinarily compelling offer or a strategic calculation about market timing and partnership value.

“We were thrilled to see this record AI industry outcome, but also surprised based on our belief that Groq was heading to IPO and a potential $100 billion valuation in the next 24 months.”

Investment Thesis Validated

Groq has been described as “one of the fastest growing, best executing companies” in the AI infrastructure space. This deal validates the thesis that purpose-built inference hardware would become critical infrastructure as AI moves from research labs to production deployment at scale.

Technology & Industry Implications

Reshaping the AI infrastructure landscape

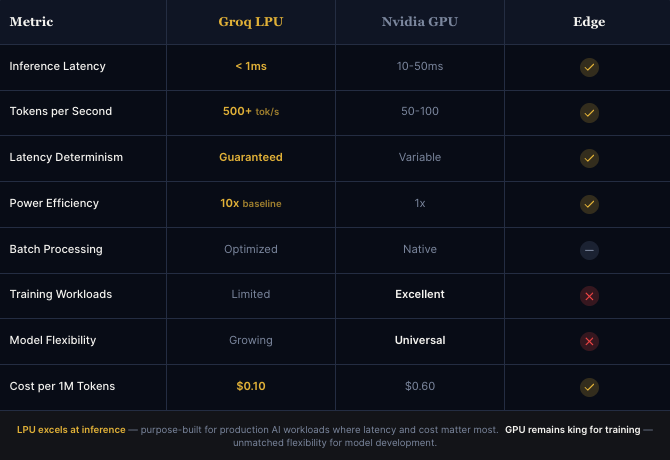

The strategic rationale for Nvidia is clear: as AI workloads shift from training to inference, the company needs to maintain its dominant position across the entire AI compute spectrum. Groq’s LPU architecture offers deterministic latency and exceptional throughput for inference workloads—capabilities that complement Nvidia’s GPU-centric approach.

[Chart: LPU vs GPU: Performance Comparison]

For Nvidia

Adds a critical inference layer to the ecosystem, positioning Nvidia to capture value across both training and production AI workloads without cannibalizing GPU sales.

For Groq

Gains access to Nvidia’s enterprise relationships and global distribution, accelerating LPU adoption while maintaining technological independence.

For the Industry

Signals that inference optimization is now a first-class concern, validating investments in purpose-built inference infrastructure by other startups.

For Developers

The 2.5M+ developers on Groq’s platform now have a pathway to deeper Nvidia integration, potentially simplifying hybrid training-inference deployments.

The Groq Journey

From Google TPU spinout to record-breaking $20B deal

Looking Ahead

The inference era begins

Nvidia’s willingness to pay $20 billion for licensing rights to inference technology validates what many in the industry have long believed: the real value in AI isn’t in training models, but in deploying them at scale. Groq’s LPU architecture, with its deterministic performance and exceptional throughput, is now positioned to be the inference backbone of the AI ecosystem built by the world’s most valuable technology company.

For Groq, its team, and its investors, this is more than a transaction—it’s validation of a vision that bet big on inference when the world was still focused on training.