Space-based signals intelligence leader HawkEye 360 files for IPO with Goldman Sachs

ALSO READ

Space analytics firm HawkEye's revenue jumped 74% in 2025, US IPO filing shows - Reuters

Geospatial analytics firm HawkEye 360 files for NYSE IPO amid defense tech boom - Investing.com

Key IPO Details

- Deal Size: The company filed to raise up to $100 million in the offering.

- Lead Underwriters: Goldman Sachs & Co. and Morgan Stanley are serving as the lead book-running managers.

- Pricing & Shares: The specific number of shares and price range have not yet been determined.

- Use of Proceeds: Funds are intended to repay debt and cover a deferred payment for its December acquisition of Innovative Signal Analysis.

Financial Performance (2025)

- Revenue: Jumped 74% to $117.7 million, up from $67.6 million in 2024.

- Profitability: The firm swung to a net profit of $2.7 million (some sources report a smaller net income of $48,000), compared to a $29 million loss the previous year.

- Backlog: Rose more than six-fold to nearly $303 million.
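The headline growth figure can be checked from the two revenue numbers reported above. The short Python sketch below is only an arithmetic check, not part of the filing; the helper name `pct_change` is made up for illustration, and the backlog line only derives the ceiling implied by "more than six-fold to nearly $303 million," since the prior-year backlog is not stated here.

```python
def pct_change(old: float, new: float) -> float:
    """Percentage change from old to new."""
    return (new - old) / old * 100.0


# Figures in $ millions, taken from the summary above.
revenue_2024 = 67.6
revenue_2025 = 117.7

growth = pct_change(revenue_2024, revenue_2025)
print(f"Revenue growth: {growth:.1f}%")

# "More than six-fold to nearly $303 million" implies the prior backlog
# was below 303 / 6. The exact prior figure is not given in the text.
prior_backlog_ceiling = 303.0 / 6
print(f"Implied prior backlog: under ${prior_backlog_ceiling:.1f} million")
```

The result rounds to the 74% reported in the filing coverage.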

HawkEye 360, the global leader in signals intelligence data and analytics, today announced that it filed a registration statement on Form S-1 with the U.S. Securities and Exchange Commission ("SEC") relating to a proposed initial public offering of its common stock. The number of shares to be offered and the price range for the proposed offering have not yet been determined.

HawkEye 360 intends to list its common stock on the New York Stock Exchange under the ticker symbol "HAWK."

Goldman Sachs & Co. LLC and Morgan Stanley (in alphabetical order) are acting as lead book-running managers for the offering. RBC Capital Markets, Jefferies, and BofA Securities are acting as additional book-running managers for the offering. Baird, Raymond James, and William Blair are acting as bookrunners for the offering.

The proposed offering will be made only by means of a prospectus. Copies of the preliminary prospectus related to the proposed offering, when available, may be obtained from: Goldman Sachs & Co. LLC, Attention: Prospectus Department, 200 West Street, New York, New York 10282, by telephone at 1-866-471-2526, by facsimile at 212-902-9316 or by email at prospectus-ny@ny.email.gs.com; or Morgan Stanley & Co. LLC, Attention: Prospectus Department, 180 Varick Street, 2nd Floor, New York, New York 10014.

A registration statement relating to these securities has been filed with the SEC but has not yet become effective. These securities may not be sold nor may offers to buy be accepted prior to the time the registration statement becomes effective. This press release shall not constitute an offer to sell or the solicitation of an offer to buy these securities, nor shall there be any sale of these securities in any state or jurisdiction in which such an offer, solicitation, or sale would be unlawful prior to registration or qualification under the securities laws of any such state or jurisdiction.

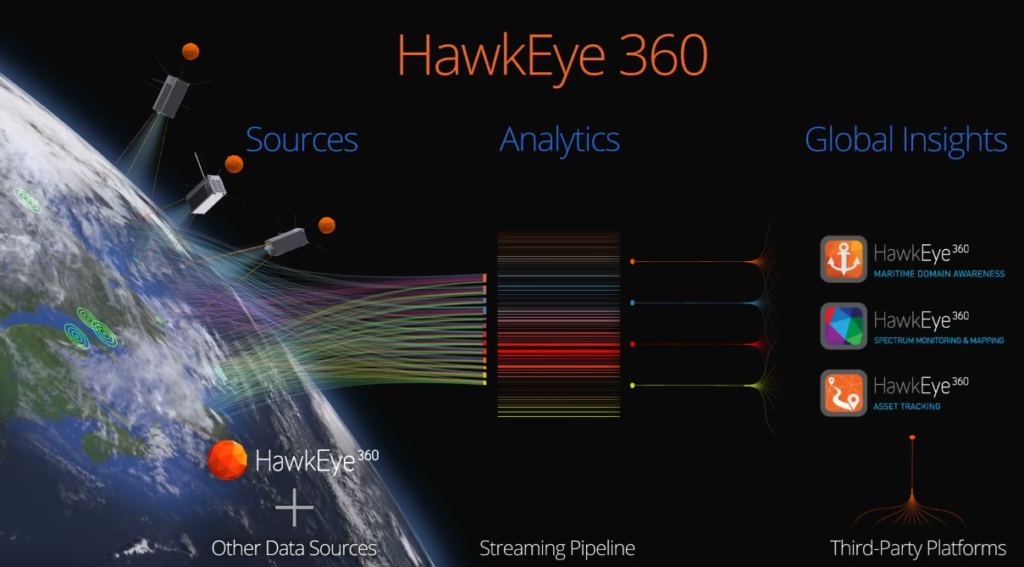

About HawkEye 360

HawkEye 360 is equipping defense, intelligence, and national security leaders with mission-critical signals intelligence to enable faster, better decision-making. By detecting, geolocating, and characterizing radio-frequency emissions worldwide, HawkEye 360 delivers trusted domain awareness and early-warning indicators to the US Government and allied partners. Our space-based collection, proprietary signal processing, and AI-powered analytics transform knowledge of RF spectrum into a strategic advantage. Proven by operational mission success, HawkEye 360 is redefining how signals intelligence strengthens national and global security.

Space Innovator Portal Space Systems raises $50 Million Series A to advance rapidly maneuverable spacecraft capabilities

ALSO READ

This founder helped build SpaceX’s most powerful rocket engine. Now he’s building a ‘fighter jet for orbit.’ - Techcrunch

Portal Space Systems raises $50 million to accelerate spacecraft development - SPACENEWS

Portal’s Series A round, led by Mach33 and Geodesic Capital with participation from Booz Allen Ventures, ARK Invest, AlleyCorp, and FUSE, reflects a growing focus on spacecraft mobility across international defense, civil, and commercial markets

Portal Space Systems, a next-generation spacecraft manufacturer developing rapidly maneuverable vehicles for operations across and between orbital regimes, today announced it has raised $50 million in Series A funding. The round was led by Geodesic Capital and Mach33, with participation from Booz Allen Ventures, ARK Invest, AlleyCorp, and FUSE.

The financing comes as a broader shift is taking hold across the space ecosystem: while access to orbit has improved significantly, the ability to move on orbit remains limited. As orbital activity increases and mission requirements become more dynamic, maneuverability is becoming a defining capability for how space is used, secured, and commercialized.

The composition of the investor group reflects that shift. Geodesic Capital brings an international dimension through its focus on U.S. and Japan technology collaboration. Booz Allen Ventures contributes deep experience in national security and defense systems. Returning investors Mach33, AlleyCorp, and FUSE underscore continued confidence in Portal’s execution and long-term strategy.

“Our customers don’t just need access to space. They need the ability to operate across it,” said Jeff Thornburg, CEO and founder of Portal Space Systems. “The systems that succeed in this next phase of space exploration will be those that can move quickly, deliberately, and repeatedly across and between orbits, and that’s been Portal’s focus since day one.”

Industry Shift Toward Mobility

Most satellites today are designed for fixed mission profiles, with limited maneuverability once on orbit. This approach is no longer effective as space becomes more congested, operationally complex, competitive, and strategically important.

Operators across defense, civil, and commercial markets are increasingly requiring spacecraft that can reposition, adapt to changing mission needs, and extend operational lifetimes.

And increasingly, it is a capability that investors are paying attention to.

“The future of space is dynamic, and that shift is being recognized globally,” said Rayfe Gaspar-Asaoka, Partner at Geodesic Capital. “Portal Space is pairing deep propulsion expertise with advanced spacecraft development built for mobility, reliability, and scale. Geodesic is thrilled to co-lead Portal’s Series A and work alongside Jeff and the team as they continue to expand what’s possible in space.”

Signals From Defense

If the commercial market is beginning to feel the pressure of immobility on orbit, the defense sector has been confronting the challenge for much longer.

The participation of Booz Allen Ventures, an organization deeply embedded in national security and defense systems, adds weight to the concept that maneuverability is not just a commercial advantage, but a strategic necessity.

“Our customers depend on advanced technology to accomplish mission outcomes quickly and efficiently,” said Travis Bales, director of Booz Allen Ventures. “Booz Allen Ventures' investment in Portal Space Systems will advance orbital warfare through the development of rapidly maneuverable spacecraft - something we know our customers need. We remain committed to the space domain and to delivering outcomes to help accomplish the most challenging missions for the U.S.”

Speed and range, long decisive in every other domain, are now entering the basic calculus of space operations.

Since Portal’s Last Fundraise

The Series A follows Portal’s $17.5 million seed round announced in 2025, one of the largest publicly disclosed seed financings in the sector at the time. In the year since, the company has advanced from early development to flight-tested systems with flight heritage and has expanded operational capability across its spacecraft portfolio.

“We are founder-driven investors, and simply fell in love with Jeff, Ian, and Prashaanth,” said Brendan Wales of FUSE. “Their execution has exceeded our expectations, and we’re proud to continue supporting the company as it enters this next phase of growth.”

Over the past year, Portal has:

- Achieved flight heritage on critical avionics systems, including its flight computer and power system, through the successful launch of its Mini-Nova spacecraft

- Advanced propulsion development, completing ground testing of its HEX thruster and reaching operational performance levels in thermal vacuum conditions

- Progressed its Starburst spacecraft, with Starburst-1 manifested on SpaceX’s Transporter-18 mission in Q4 2026 to demonstrate rendezvous and proximity operations, rapid retasking, and significant orbital maneuverability

- Continued development of its Supernova spacecraft, targeting a 2027 debut mission, with more than 80 percent of its systems shared with Starburst to accelerate development and reduce risk through flight heritage

- Expanded its team to approximately 40 employees, expecting to scale to 100 in 2026

- Initiated development of a 52,000-square-foot manufacturing facility in Bothell, supporting the transition from development to production

- Established early mission partnerships, including work with Paladin Space on maneuverable debris removal solutions, DRAAS, and engagement with commercial customers such as Starlab

These milestones come less than a year after the company’s seed announcement and reflect an accelerated development cadence across both hardware and mission planning.

“Portal Space Systems is building a category-defining company through its ability to unlock a new era of maneuverability in space,” said Brannon Jones, Principal at AlleyCorp. “The team’s deep technical and industry experience across SpaceX, NASA, L3Harris, and the Air Force Research Lab is a huge reason why we see them emerging as the clear leaders to solve one of the most critical bottlenecks in the orbital economy of space.”

Commercializing Solar Thermal Propulsion

Portal’s Supernova spacecraft is powered by solar thermal propulsion, a technology previously validated at NASA and Air Force Research Laboratory programs but never commercialized. By combining advances in materials, thermal systems, and deployable structures, Portal is working to bring this capability into operational use, enabling higher delta-v and more flexible mission profiles compared to traditional propulsion systems.
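For readers who want to see why propulsion efficiency translates into "higher delta-v," the standard Tsiolkovsky rocket equation relates delta-v to specific impulse and the ratio of fueled to empty mass. The Python sketch below is a textbook illustration only. The specific impulse values and spacecraft masses are placeholder assumptions chosen for the example, not figures published by Portal.

```python
import math

G0 = 9.80665  # standard gravity, m/s^2


def delta_v(isp_seconds: float, wet_mass_kg: float, dry_mass_kg: float) -> float:
    """Ideal delta-v in m/s from the Tsiolkovsky rocket equation."""
    return isp_seconds * G0 * math.log(wet_mass_kg / dry_mass_kg)


# Hypothetical spacecraft: 500 kg fueled, 300 kg empty (assumed values).
wet, dry = 500.0, 300.0

# Placeholder specific impulses for comparison (assumed, not Portal data).
for label, isp in [("lower-Isp system", 230.0), ("higher-Isp system", 700.0)]:
    print(f"{label}: {delta_v(isp, wet, dry):.0f} m/s")
```

For a fixed mass ratio, delta-v scales linearly with specific impulse, which is the general mechanism behind the claim of higher delta-v for more efficient propulsion.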

Portal expects to use the Series A funding to accelerate spacecraft development, expand manufacturing capabilities, and support upcoming Starburst and Supernova missions.

As co-lead investor in the round, Mach33 sees Portal’s approach as foundational to the next phase of space infrastructure:

“As investors in deeply technical fields, we only back a founding team when we have extraordinary conviction in the team’s ability to execute technically and commercially. More so when doubling down. We’re proud to lead this round alongside Geodesic and other great co-investors. We are confident that Portal will become the Space Mobility Prime in the near future,” said Aaron Burnett, Group CEO of Mach33.

About Portal Space Systems

Portal Space Systems is a next-generation spacecraft company headquartered in Washington state. The company builds re-deployable, maneuverable spacecraft designed to support defense, civil, and commercial missions on operational timelines. Portal emerged from stealth in 2024, earned STRATFI support, raised one of the largest publicly announced seed rounds in the sector, and was named a Via Satellite “Top 10 Startup to Watch.”

Fintech leader Kafene and Nationwide Marketing Group partner to expand access to flexible ownership options for retail customers

Kafene, a point-of-sale leasing platform that helps retailers offer nonprime customers more flexible purchase options through lease-to-own (LTO) agreements, today announced a new partnership with Nationwide Marketing Group (NMG), the premier membership organization supporting independent retailers across North America.

Unlike traditional LTO companies that operate on a one-size-fits-all approval model, Kafene takes a fundamentally different approach: tiered, performance-based pricing that allows merchants to approve more customers at lower cost, on terms calibrated to each individual's financial profile. Lower pricing drives higher conversion. NMG members are encouraged to integrate Kafene's LTO platform directly into their sales process to offer a wider range of shoppers a realistic path to owning premium appliances, electronics, furniture and home products. The result is a genuine win on both sides of the transaction: independent retailers capture sales they would have otherwise lost and customers leave with the products they need.

"Partnering with Nationwide Marketing Group is a natural fit for us," said Darryl Cole, vice president of sales at Kafene. "They bring an incredible network of independent retailers, and we bring ways to help those retailers serve more customers. Together, we can put the right products in more hands and help NMG members grow."

The timing reflects a broader shift in how consumers approach major purchases. With household budgets stretched and traditional credit increasingly out of reach for a significant share of the population, demand for flexible, transparent alternatives has never been higher. Kafene was built using modern underwriting technology to match pricing to consumer circumstances, driving higher approval rates and lower pricing without sacrificing the performance merchants depend on.

"Independent retailers succeed when they have the right tools to serve every customer who walks through their doors," said Chris Kirk, senior vice president of business & financial services at Nationwide Marketing Group. "Kafene's technology-driven approach to lease-to-own expands the financing options available to our members, helping them convert more opportunities into sales while providing shoppers with a transparent and flexible path to ownership."

The partnership marks a continued expansion of Kafene's retail footprint and deepens NMG's commitment to equipping its members with tools that drive both revenue and customer loyalty. "NMG is a key partner for us within the industry, and we're excited to bring Kafene's innovative approach to leasing to their merchants," said Josh McSpadden, head of business development at Kafene.

About Kafene

Kafene is a point-of-sale leasing platform that helps retailers offer nonprime customers more flexible purchase options through lease-to-own (LTO) agreements. Kafene's technology-driven platform delivers high approval rates through tiered, performance-based pricing, giving retailers the flexibility to approve more customers while maintaining the business outcomes that matter. For more information, visit www.kafene.com.

About Nationwide Marketing Group

Nationwide Marketing Group is North America's largest, most comprehensive buying group for independent retailers, manufacturers, and professionals who specialize in furniture, bedding, appliances, electronics, custom installation, other around-the-home categories, and more. For over 50 years, NMG has championed the success of the independent channel, providing personalized support to 5,500+ members representing 14,000 storefronts nationwide. Across the industry, NMG is trusted to drive growth, maximize savings, and simplify operations. With transparent performance tracking, forward-thinking strategies, and a deep commitment to the independent channel, NMG helps its members stay ahead of the competition, on their terms. To learn more, visit nationwidegroup.org.

Contacts

For Kafene:

press@kafene.com

For Nationwide Marketing Group:

pr@nationwidegroup.org

Signals intelligence leader HawkEye 360 successfully launches Cluster 14 satellites

ALSO READ

HawkEye 360 Expands Board of Directors with Two New Appointments

HawkEye 360 Establishes International Advisory Board to Support Allied Security Partnerships

HawkEye 360 today announced the successful launch and initial contact with its Cluster 14 satellites, deployed aboard SpaceX's Falcon 9 rocket as part of the Transporter-16 rideshare mission. The satellites were inserted into a sun-synchronous orbit (SSO) and are currently undergoing standard commissioning activities.

The addition of Cluster 14 expands HawkEye 360's space-based signals intelligence constellation and supports growing customer missions across defense, maritime, and national security applications. A sun-synchronous orbit enables consistent coverage patterns, supporting global monitoring of radio-frequency activity.

"Each new cluster helps us scale the constellation to meet increasing customer demand," said John Serafini, Chief Executive Officer of HawkEye 360. "Cluster 14 builds on the strong technical foundation of our existing satellites while continuing to advance system performance and reliability."

Cluster 14 includes incremental improvements to onboard processing that help accelerate data processing timelines and improve the efficiency of the company's sensing and analytics platform.

"With each deployment, we continue strengthening the constellation while supporting evolving customer missions," said Todd Probert, Chief Operating Officer of HawkEye 360. "Cluster 14 reflects our continued focus on innovation and operational excellence as we expand the platform."

HawkEye 360 satellites detect and geolocate radio frequency emissions from communications systems, navigation devices, and radar sources. Combined with proprietary signal processing and AI-powered analytics, the platform provides signals intelligence insights to government and allied customers worldwide.

Odys Aviation and Honeywell Aerospace partner to advance Airborne Counter-UAS Defense

Modern threats demand modern solutions: a new system provides an airborne defense layer to protect critical infrastructure from drone threats.

We are thrilled to see an incredible 2+2=5 collaboration that advances modern defense systems with Odys Aviation partnering with Honeywell Aerospace Technologies to bring a new airborne counter-UAS solution to the defense tech market. This collaboration builds on more than a year of joint development and systems integration work to adapt Honeywell's SAMURAI Autonomous Airborne platform into Odys Aviation's long-range hybrid-electric VTOL aircraft, Laila.

The result? A new defensive counter-UAS layer that:

• Bridges the gap between ground sensors and missile defense systems

• Reduces reliance on costly kinetic defenses

• Extends protection from the doorstep to the horizon

Honeywell (NASDAQ: HON) announced a collaboration with Odys Aviation, a dual-use aerospace company building hybrid-electric vertical take-off and landing (VTOL) aircraft, to deliver a persistent airborne defense solution designed to protect critical infrastructure and strategic assets from rapidly evolving drone threats.

The collaboration on this counter-unmanned aerial system (C-UAS) builds on more than a year of joint development and systems integration work to adapt Honeywell Aerospace’s Stationary and Mobile UAS Reveal and Intercept (SAMURAI) Autonomous Airborne platform for deployment on Odys' long-range Laila UAV. The effort supports the broader United States national strategy to further strengthen domestic leadership in advanced aviation and accelerate the deployment of American-built drone technologies across defense and critical infrastructure protection missions.

Together, the Laila-SAMURAI system introduces a new defensive layer between ground-based sensors and high-end missile defense systems, reducing reliance on costly kinetic defenses while extending protection coverage across vast and remote areas. This capability is particularly relevant for distributed energy infrastructure, including refineries, pipelines, and offshore production platforms.

"SAMURAI delivers critical counter-UAS capabilities with proven reliability, scalability, and seamless integration into existing defense architectures. By leveraging Honeywell’s long history in avionics, sensors and defense systems, we are enabling C-UAS capabilities that protect farther, respond faster, and operate with minimal downtime." — Matt Milas, president, Defense and Space, Honeywell Aerospace.

"Drone threats have fundamentally changed the economics and operational requirements of air defense,” said James Dorris, CEO of Odys Aviation. “Critical infrastructure and forward-operating locations require persistent protection across large areas and the ability to engage threats at the horizon long before they're at the doorstep. By combining Honeywell’s SAMURAI system with the endurance, runway independence, and onboard power capability of Laila, we're introducing a new airborne defense layer designed for today and into the future."

Laila will serve as the first airborne application of the Honeywell SAMURAI system, and its hybrid propulsion system – compatible with Jet A, Jet A-1, and JP-8 fuels – produces enough power to stay in flight for up to eight hours across a 450-mile range. Laila eliminates the need for dedicated charging infrastructure, enabling rapid deployment in remote, expeditionary, and offshore environments.

Built using model-based systems engineering, SAMURAI provides a turnkey, modular solution that incorporates diverse customer-selected sensors and effectors. The system is compliant with Modular Open Systems Approach standards, supporting interoperability, lifecycle visibility, and long-term sustainment.

About Honeywell Aerospace

Products and services from Honeywell Aerospace Technologies are found on virtually every commercial, defense and space aircraft, and in many terrestrial systems. The Aerospace Technologies business unit builds aircraft engines, cockpit and cabin electronics, wireless connectivity, thermal, power and actuation systems, and more. Its hardware and software solutions create more fuel-efficient aircraft, more direct and on-time flights and safer skies and airports. For more information, visit aerospace.honeywell.com or follow Honeywell Aerospace Technologies on LinkedIn.

Honeywell is an integrated operating company serving a broad range of industries and geographies around the world, with a portfolio that is underpinned by our Honeywell Accelerator operating system and Honeywell Forge platform. As a trusted partner, we help organizations solve the world's toughest, most complex challenges, providing actionable solutions and innovations for aerospace, building automation, industrial automation, process automation, and process technology, that help make the world smarter and safer as well as more secure and sustainable. For more news and information on Honeywell, please visit www.honeywell.com/newsroom.

About Odys Aviation

Odys Aviation designs, develops and manufactures long-range, technologically advanced dual-use VTOL aircraft that solve global challenges across defense, logistics, and passenger travel. The company is pioneering the next generation of VTOL aircraft which use hybrid-electric propulsion systems to deliver the optimal balance between range and payload.

Based in Long Beach, CA, Odys Aviation was launched in 2021 and is led by seasoned engineers and strategists from SpaceX, Airbus, Tesla and the U.S. Department of Defense. The company has $11B+ in signed LOIs to date. Visit www.odysaviation.com for more information.

Nixxy AI (NASDAQ: NIXX) signs infrastructure contract with Telforge to manage up to $60,000,000 in telecommunications traffic

Next-generation AI infrastructure company Nixxy, which is advancing telecom and fintech convergence, announced it has signed an infrastructure services agreement with Telforge, a wholly owned subsidiary of FingerMotion (NASDAQ: FNGR). Telforge delivers enterprise-grade, cloud-based communication solutions that enable networks and enterprises to reliably exchange critical information.

The twelve-month contract is for Nixxy to potentially manage up to $60,000,000 of FingerMotion’s 2026 revenues through FNGR’s new subsidiary, Telforge, Inc., an acquisition that was signed on March 18, 2026. As part of the engagement, Nixxy will provide the backend operational support, focused on strong commercial outcomes for wholesale voice performance, switch and routing management, wholesale rate negotiations, settlement reconciliation, and third-party invoice validation. All traffic will be run on Telforge’s internal switching platform, which will be scaled to match Nixxy’s traffic levels over the term of the contract.

Under the agreement, Nixxy will receive a fixed monthly service fee. In addition, the Company expects the engagement to generate an estimated incremental operational benefit of approximately $20,000 per month to its existing telecom business, driven by enhanced routing performance and related operating efficiencies.

Mike Schmidt, CEO of Nixxy, stated, “This services agreement proves the robust nature of Nixxy’s software and ability to outsource its infrastructure to other global companies who desire efficiencies and access to our data services. It also adds recurring services revenue, supports further scale in our wholesale operations, and is aligned with our focus on building a more efficient, higher-performing communications infrastructure business.”

Martin Shen, CEO of FingerMotion, stated, “By combining Nixxy’s seasoned operations with the Telforge team and adding Telforge’s US customer base to our access to customers in Asia, we are positioned to grow our telecom division to significant revenue milestones in 2026. Nixxy’s alignment with FingerMotion allows us to achieve this without additional capital and high operating costs, which should result in a win-win for both parties.”

About FingerMotion, Inc.

FingerMotion is an evolving technology company with a core competency in mobile payment and recharge platform solutions in China. As the user base of its primary business continues to grow, the Company is developing additional value-added technologies to market to its users. The vision of the Company is to rapidly grow the user base through organic means and have this growth develop into an ecosystem of users with high engagement rates utilizing its innovative applications. Developing a highly engaged ecosystem of users would strategically position the Company to onboard larger customer bases. FingerMotion eventually hopes to serve over 1 billion users in the China market and eventually expand the model to other regional markets.

About Nixxy, Inc.

Nixxy, Inc. (NASDAQ: NIXX) is a communications and data infrastructure company focused on scaling carrier-grade telecom rails spanning messaging, voice, and automation-enabled workflows. The Company is focused on executing disciplined growth across communications and adjacent ecosystems where infrastructure, identity, and transaction workflows converge.

Filings and press releases can be found at https://nixxy.com/investor-relations

Contact Information:

Investor Contact: Nixxy, Inc.

Investor Relations Email: IR@nixxy.com

Phone: (877) 708-8868

Superintelligence Cloud provider Lambda expands AI factories to include NVIDIA Vera CPUs

Lambda announces expansion in building its Superintelligence Cloud at NVIDIA GTC 2026

Lambda announced NVIDIA Vera CPUs, new Lambda Bare Metal Instances, NVIDIA Photonics, and NVIDIA STX coming to the Superintelligence Cloud.

Lambda is expanding its AI factories to include NVIDIA Vera CPUs to power the software environments behind reinforcement learning and agentic AI, new Lambda Bare Metal Instances on NVIDIA Vera Rubin NVL72 Superclusters, a production-scale NVIDIA GB300 NVL72 Supercluster with NVIDIA Quantum-X Photonics, and our role as an early NVIDIA BlueField-4 STX adopter.

Lambda is an early NVIDIA Vera CPU launch partner

Models no longer just generate responses. They plan, call tools, run code, and interact with software environments in continuous feedback loops. Intelligence now extends beyond the model into surrounding systems, where millions of CPU-based sandbox environments execute actions and return results to GPUs.

For modern agentic workloads, evaluation latency directly affects overall system performance. When sandbox environments fall behind, accelerators must wait for results. Higher per-core CPU performance increases reinforcement learning iterations per GPU hour and improves agent responsiveness, maximizing AI factory throughput across training and inference.
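The relationship between sandbox latency and accelerator throughput can be sketched with a toy model. The Python below assumes the simplest case, in which the GPU sits idle while the CPU sandbox evaluates each step, with no overlap or batching. The function names and timing values are hypothetical and are not Lambda or NVIDIA measurements.

```python
def iterations_per_gpu_hour(gpu_seconds: float, sandbox_seconds: float) -> float:
    """RL iterations per GPU-hour if the GPU waits for every sandbox result."""
    return 3600.0 / (gpu_seconds + sandbox_seconds)


def gpu_busy_fraction(gpu_seconds: float, sandbox_seconds: float) -> float:
    """Share of wall-clock time the GPU spends doing useful work."""
    return gpu_seconds / (gpu_seconds + sandbox_seconds)


# Assumed timings: 2.0 s of GPU work per iteration, with the sandbox
# step taking 1.0 s on a slower CPU and 0.5 s on a faster one.
slow = iterations_per_gpu_hour(2.0, 1.0)
fast = iterations_per_gpu_hour(2.0, 0.5)

print(f"slower sandbox: {slow:.0f} iterations/GPU-hour, "
      f"GPU busy {gpu_busy_fraction(2.0, 1.0):.0%}")
print(f"faster sandbox: {fast:.0f} iterations/GPU-hour, "
      f"GPU busy {gpu_busy_fraction(2.0, 0.5):.0%}")
```

Real systems pipeline sandbox work with GPU work, so the actual gain depends on how much of the evaluation time is exposed. The model shows only the direction of the effect described above.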

NVIDIA Vera—high-density CPU capacity for AI factories:

- 88-core CPU with high single-thread performance, tuned for latency-sensitive tasks

- Spatial multi-threading increases agentic inference and RL sandbox density

- Up to 1.5 TB of LPDDR5X memory capacity and 1.2 TB/s bandwidth configurations

- Up to 1.8 TB/s CPU-to-GPU connectivity, reducing PCIe bottlenecks

The development of modern models involves both long training runs and millions of short evaluations. NVIDIA Vera reduces evaluation time, increases the density of sandboxes per rack, and stabilizes per-core throughput. The result is repeatable behavior when you scale experiments into production.

Lambda is an early NVIDIA BlueField-4 STX partner

While the industry is shifting toward agentic AI, long-term memory and the processing of massive context windows are critical bottlenecks in inference. NVIDIA STX is a modular reference architecture for rack-scale AI storage, accelerating advanced inference through next-generation hardware integration and optimized KV-cache management.

Lambda is an early NVIDIA STX adopter, so the storage layer never becomes the bottleneck for frontier-scale GPU clusters:

- Context memory at scale: Up to 5x higher tokens per second and 5x greater power efficiency than traditional storage.

- Acceleration at every layer: Full cluster integration of NVIDIA Vera CPUs, Rubin GPUs, BlueField-4 DPUs, and Spectrum-X Ethernet networking for data center-scale workloads.

- Foundation for AI-native data platforms: High-speed data access for context memory, enterprise data, and high-performance storage use cases.

NVIDIA STX-based platforms will be available in the second half of 2026, along with our NVIDIA Vera Rubin NVL72 Superclusters.

Lambda Bare Metal Instances on NVIDIA Vera Rubin NVL72 Superclusters

Today, Lambda is announcing Bare Metal Instances on Superclusters with NVIDIA Vera Rubin NVL72.

For teams running large-scale foundation model training and complex distributed workloads, such as disaggregated inference, direct hardware access matters. Virtualization overhead is not theoretical at this scale. It compounds. Bare metal removes that layer entirely, while Lambda's Bare Metal Instances provide cloud usability.

What Lambda Bare Metal Instances give you

- Higher performance with no hypervisor overhead

- Faster access to the newest compute as it becomes available

- Full control over the hardware stack with no shared neighbors

- Complete security oversight from the firmware layer up

What we built differently

- One-to-one mapping between instances and physical hosts, with API parity for lifecycle operations. You get direct access to CPU, GPU, memory, and local storage while managing instances the same way you manage cloud VMs.

- With no hypervisor mediating device access, workloads run directly on the underlying hardware, and your processes communicate directly over sixth-generation NVIDIA NVLink for scale-up and NVIDIA Quantum-X800 InfiniBand for scale-out. All-reduce, tensor parallel, and disaggregated prefill-decode traffic run over the raw fabric.

- When a host degrades or fails, instance mobility moves your workload to healthy hardware without manual intervention, enabling faster recovery.

You get the performance of raw bare-metal servers, with programmatic provisioning, predictable maintenance, and observability built for production ML.

To learn more, attend Maxx Garrison’s session on what deployment will look like for Lambda's Bare Metal Instances with NVIDIA Vera Rubin NVL72 and NVIDIA GB300 NVL72, which covers what rack-scale readiness actually requires.

Lambda's NVIDIA GB300 NVL72 Supercluster with NVIDIA Quantum-X Photonics

At scale, the fabric is the system. You can’t bolt a high-performance network onto a rack that wasn’t engineered for it from the start.

Lambda is leading one of the largest deployments of NVIDIA Quantum-X InfiniBand Photonics co-packaged optics switches to date, in an AI factory with 10,000+ NVIDIA GB300 GPUs. CPO switches eliminate the bandwidth bottleneck between racks and change the performance-per-watt calculus at cluster scale. We announced our work on NVIDIA CPO and next-generation networking fabrics in 2025. Now it’s running in production.

What Lambda engineered for

- Rack-first design: Power and liquid cooling are planned so racks run at sustained utilization without thermal or electrical surprises when jobs push the system hard.

- Photonics fabric: NVIDIA Quantum-X InfiniBand Photonics CPO switches lower power, increase bandwidth, and improve resilience. That raises cluster-level bisection bandwidth and reduces energy per unit of useful work.

- Validated NVIDIA GB300 NVL72 scale: Lambda hosts NVIDIA GB300 NVL72 clusters at the scale required for frontier training while preserving deterministic fabric behavior across the full job.

This Supercluster is built to run large NVIDIA GB300 NVL72 jobs repeatedly and reliably. That reduces surprises and lowers the cost per useful result.

Bringing it together: Lambda's full-stack validation

Lambda validates the full stack before handing over clusters: production firmware, drivers, and orchestration are tested as a single unit. A pilot-to-production rollout then follows, so capacity, software, and operations arrive together:

- Small-scale NVIDIA PODs to validate sandbox density and CPU-to-GPU connectivity before full-scale deployment

- Phased rollouts so software and tooling scale alongside capacity

- Out-of-band telemetry and DPU-based controls to monitor and manage fabric without adding noise or removing resources from AI workloads

The system runs the same jobs repeatedly with minimal manual intervention. That’s how infrastructure moves from working in a lab to running in production.

NVIDIA Vera CPU delivers predictable CPU throughput. Rack-first engineering and photonics networking make the fabric scalable. Bare Metal Instances give teams control without operational overhead. Together, they make the Superintelligence Cloud a platform teams can trust in production.

Lightmatter launches photonics architecture initiative with industry leaders in the Open Compute Project for Co-Packaged Optics

The open specification and ecosystem collaboration aim to ease the AI infrastructure transition to integrated optics for hyperscalers

Lightmatter today announced a new collaborative initiative within the Open Compute Project (OCP) to create open specifications for a shared reference architecture enabling interoperable Co-Packaged Optics (CPO) in next-generation AI systems. The announcement, in conjunction with the submission of a white paper titled "Open Collaboration for CPO-Enabled AI Systems," will initiate the project. This proposal underscores Lightmatter’s commitment to advancing AI infrastructure in open collaboration with ecosystem leaders, including Celestica, Corning Incorporated, Dell Technologies, Inc., Flex, Foxconn Interconnect Technology, Hyve Solutions, Keysight, Qualcomm Technologies, Inc., and Quanta Cloud Technology.

The exponential increase in AI workloads has exposed a critical bottleneck in electrical interconnects, which is now driving the industry toward CPO solutions to scale next-generation AI infrastructure. CPO offers a solution by integrating photonics directly with silicon, providing massive increases in bandwidth that contribute to large gains in compute, power and space efficiency. However, the complexity of CPO systems presents challenges around integration, interoperability, reliability and scaling across a diverse supply chain. This project directly addresses the need for collaborative open standards that will enable a robust ecosystem, high-volume production and seamless integration of CPO in hyperscale data centers.

"The AI revolution is at a pivotal moment, and we must ensure interoperable solutions that can be produced and deployed at scale across the industry’s diverse ecosystem," said Nick Harris, Ph.D., Founder and CEO at Lightmatter. "We believe the answer is in open collaboration. By working with the OCP community, we can define the standards that will unlock the full potential of CPO, enabling a vibrant ecosystem across the hyperscaler supply chain. We are already seeing strong support from industry leaders who recognize the urgency of this effort."

This collaboration proposes to bring together AI system architects, advanced manufacturing partners, and networking leaders to align on a shared vision and roadmap for CPO. The goal is to develop open standards for components and systems and to establish a framework for interoperability testing and certification.

Analyst Perspective from Dell’Oro: "The rapid growth of AI workloads is creating immense pressure on the entire data center stack, with the interconnect fabric emerging as a major bottleneck," said Sameh Boujelbene, Vice President, Market Research at Dell'Oro Group. "The industry has long recognized the critical role of Co-Packaged Optics in addressing power, performance, and density challenges. An open, collaborative initiative like the one proposed by Lightmatter is crucial to bridging the technological and supply chain gaps that have hindered widespread adoption, accelerating the path to next-generation AI infrastructure."

This initiative is already generating significant interest and support among leading ecosystem vendors and experts who recognize the importance of open, interoperable CPO-enabled AI infrastructure:

Celestica: "Celestica is a long-standing participant in the OCP community, we support industry collaboration to advance open networking and hardware solutions," said Randy Clark, VP, System Design Engineering, Celestica. "Industry efforts exploring areas such as open standards for co-packaged optics contribute to the ongoing work supporting the next generation of high-performance, open AI infrastructure."

Corning Incorporated: “Co-Packaged Optics represents a fundamental shift in how optical connectivity is designed, manufactured, and deployed for large-scale AI systems,” said Benoit Fleury, Photonics Connectivity Solutions Commercial Director at Corning Optical Communications. “Successfully deploying CPO at scale will require tight integration of photonic materials, optical fibers, high-density connectivity, advanced packaging, and system-level design, all delivered with the performance and reliability that hyperscalers demand. Corning supports this OCP initiative to define open-reference architectures and specifications that enable broad interoperability and manufacturability across the ecosystem as it transitions from pluggable to integrated optics for next-generation AI infrastructures.”

Flex: "Delivering next-generation AI infrastructure requires a resilient, transparent supply chain and strong industry collaboration,” said Rob Campbell, President, Communications, Enterprise and Cloud at Flex. “As a longstanding contributor to the Open Compute Project, Flex is dedicated to advancing open standards that strengthen the data center ecosystem. Supporting this initiative builds on our close collaboration with customers on CPO, accelerating interoperability and certification frameworks that are essential to the widespread deployment of CPO-based systems globally.”

Foxconn Interconnect Technology, LTD: "As a leading provider of advanced IT infrastructure for GPU-accelerated computing, Foxconn understands the immense pressure on data center interconnects," said Joseph Wang, CTO at Foxconn Interconnect Technology. "The lack of standardization in Co-Packaged Optics is a significant hurdle for widespread adoption. We believe that by joining this OCP initiative, we can help define a common framework that will accelerate the development and deployment of CPO solutions, enabling our customers to build more powerful and efficient AI systems."

Hyve Solutions: "As exponential increases in AI training and inference workloads push up against existing interconnect limits, Co-Packaged Optics presents an opportunity for a more efficient and unified scale-up and scale-out network architecture,” said Winnie Lin, VP of Engineering at Hyve Solutions. “By contributing to this OCP reference architecture, we are helping to build an interoperable framework that enables hyperscalers to deploy more scalable, energy-efficient compute clusters with the performance and density required to drive the next wave of AI model innovation."

Keysight: "As AI workloads push the boundaries of data center infrastructure, the industry requires aligned approaches to ensure seamless interoperability and performance at scale,” said Dr. Joachim Peerlings, Vice President and General Manager at Keysight's Networks and Data Center Group. “Keysight is proud to support OCP's AI Infrastructure Standards initiative, bringing our deep expertise in high-speed electrical and optical validation and emulation to this collaborative ecosystem. By establishing robust, open standards for next-generation interconnects, we are helping innovators mitigate risk and accelerate the path from CPO design to optically connected zettascale computing."

Qualcomm Technologies: “As Qualcomm Technologies continues to scale its high-performance, power-efficient compute architecture from the edge into the hyperscale data center, the need for a collaborative and open interconnect framework has never been greater,” said Tony Chan Carusone, Technology Executive, Qualcomm Technologies, Inc. and Former CTO of Alphawave Semi, a Qualcomm company. “A shared reference architecture for Co-Packaged Optics is essential for fostering a diverse ecosystem that can deliver the interoperability and scalability required for the next generation of AI infrastructure.”

Quanta Cloud Technology (QCT): "QCT is committed to providing powerful and efficient open infrastructure for AI-enabled data centers," said Mike Yang, President of QCT. "We are pleased to support this collaborative initiative to standardize Co-Packaged Optics, which will accelerate the development of more powerful, scalable, and efficient solutions for our customers and the broader AI community."

Lightmatter invites other industry leaders and system architects to join the initiative and help shape the future of AI interconnects. We are prepared to contribute our expertise and actively engage with standards bodies, including the OIF, IEEE, and OCP, and with MSAs, including XPO and OIP, to accelerate the adoption of this transformative technology.

To learn more or to join this Open Collaboration for CPO-Enabled AI Systems project, please contact our team at ecosystem@lightmatter.co.

About Lightmatter

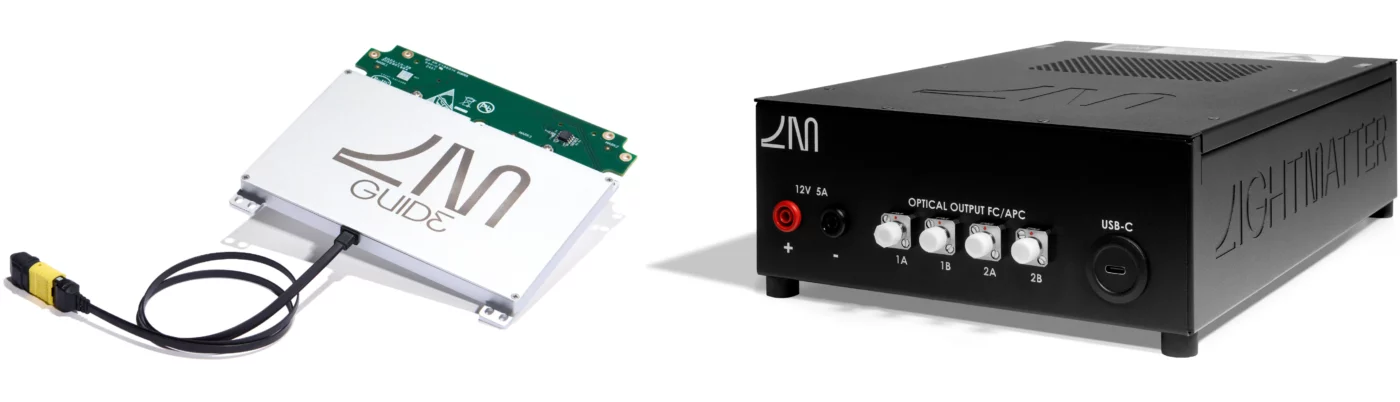

Lightmatter® is leading a revolution in AI data center infrastructure, enabling the next giant leaps in human progress. The company’s groundbreaking Passage™ platform—the world’s first 3D-stacked silicon photonics engine—and Guide®—the industry's first VLSP™ light engine—connect thousands to millions of processors. Designed to eliminate critical data bottlenecks, Lightmatter’s technology delivers unprecedented bandwidth density and energy efficiency for the most advanced AI and high-performance computing workloads, fundamentally redefining the architecture of next-generation AI infrastructure. Visit www.lightmatter.co to learn more.

Lightmatter, Passage, Guide and VLSP are trademarks of Lightmatter, Inc. Any other trademarks or registered trademarks mentioned in this release are the property of their respective owners.

Contacts

Media Contact:

Lightmatter

Katie Maller

press@lightmatter.co

Inside Lightmatter's wide and parallel silicon photonics wins

OCI MSA v1.0 is out, but Lightmatter didn't wait for a spec. They’ve been building this architecture for years.

- 3 generations of Passage silicon

- World’s 1st 800G single-fiber BiDi link

- World’s 1st 1,600Gbps/fiber chip with Qualcomm

- Guide laser sampling now

DWDM is the Future of Scale-Up Networking

By Nick Harris, Founder and CEO, Lightmatter

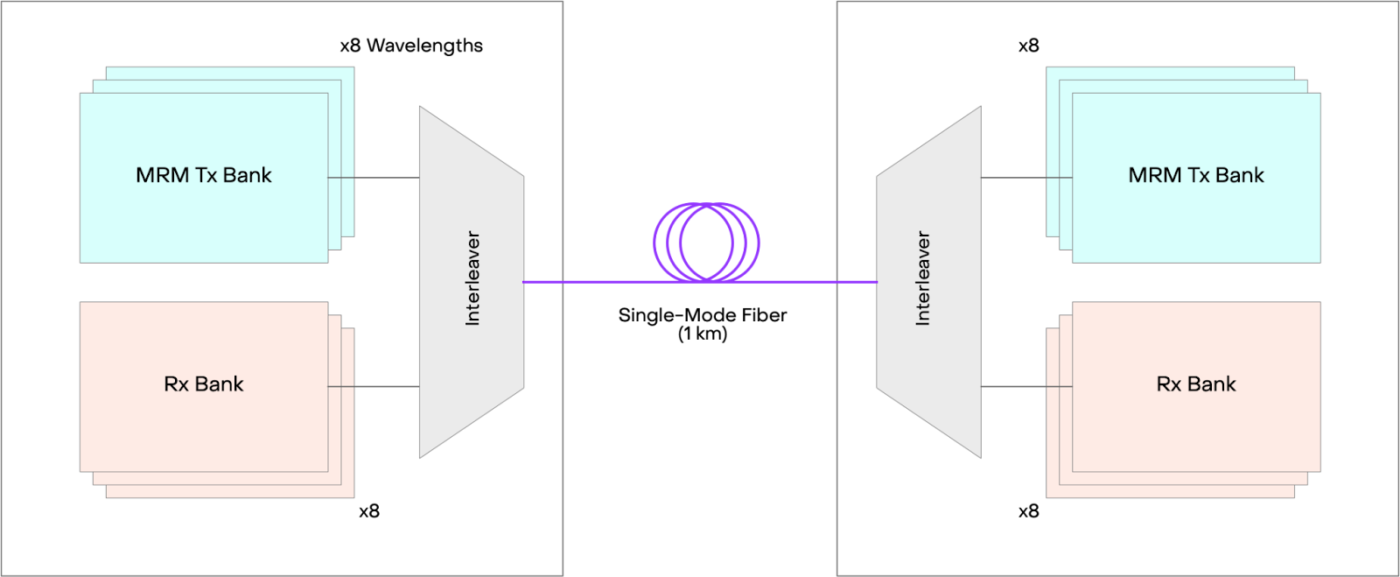

Last week, the Optical Compute Interconnect (OCI) Multi-Source Agreement (MSA) published its v1.0 line interface specification—a 24-page document co-authored by NVIDIA, Meta, Microsoft, OpenAI, Broadcom, and AMD that defines the optical physical layer for AI scale-up interconnects. It specifies Dense Wavelength-Division Multiplexing (DWDM) wavelength channels in the O-band (around 1300 nm) on a single bidirectional (BiDi) fiber, micro-ring resonators for modulation, and an external laser source architecture.

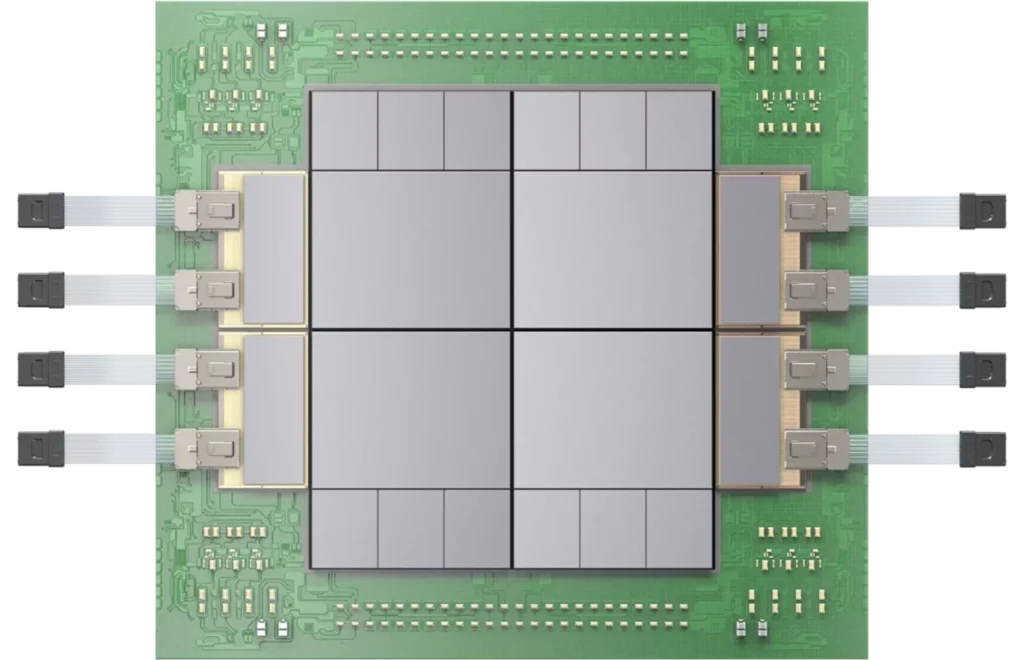

This convergence confirms that the industry is aligning on a path guided by fundamental physical constraints and first principles. At Lightmatter, we have three generations of DWDM Passage™ interconnect silicon that meet the MSA specification, racked and stacked in our validation data centers, along with our Guide® external laser source (ELS), based on our Very Large Scale Photonics (VLSP™) technology, to power them. We’re demonstrating these record-setting platforms at OFC this week. Stop by our booth!

Start from First Principles: BiDi and DWDM

In an AI scale-up fabric, radix is everything. The number of direct connections each accelerator can make to its peers determines collective bandwidth, all-reduce performance, and ultimately how fast your model trains. More connections, better cluster. You want as many as you can possibly run.

The problem is the fiber plant—the cables, connectors, and routing hardware that ties the whole cluster together. Every fiber is a cost, a failure mode, and a hard physical constraint. At some point, the physical density of the fiber plant becomes the binding constraint—not compute, not memory, not the silicon. The interconnect fabric inside an AI cluster is already one of the most fiber-dense environments humans have ever built, and it gets dramatically worse as clusters scale from tens of thousands of accelerators to hundreds of thousands.

The first unlock is bidirectional fiber. A conventional optical link uses one fiber for transmit (TX) and one for receive (RX)—so every logical connection consumes two fibers. Run TX and RX in the same fiber using separate wavelength bands, and you’ve immediately doubled the number of logical connections your fiber plant can support. Same cables, same connectors, same routing. Twice the radix.

The second unlock is DWDM. Once you’re running BiDi, the question becomes how much bandwidth you can carry on each of those links. For short-reach, high-density cluster links, the answer isn’t higher-order modulation. PAM4 and PAM8 pack more bits per symbol, but the tighter eye opening drives up your pre-Forward Error Correction (FEC) Bit Error Rate (BER) floor—which means you need stronger FEC (with higher power consumption) to close the link, which adds pipeline latency in the SerDes. In AI scale-up, where collective operation performance is latency-sensitive, and customers are actively minimizing FEC overhead, that’s a real tax. The bill for PAM4 gets worse as you scale the cluster and the latency budget tightens. You’re paying in power, silicon area, and latency. NRZ per DWDM channel sidesteps all of it: the link budget at these distances is forgiving, the receivers are simple, and you can run minimal FEC.
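The modulation trade-off above can be put in rough numbers. The sketch below uses the idealized textbook model, which is an assumption and not taken from any Lightmatter or OCI document: the same peak-to-peak swing is split into equally spaced levels, so a PAM-M signal has M - 1 stacked eyes, each 1/(M - 1) as tall as the NRZ eye. The penalty is quoted with the electrical-amplitude convention (20·log10); the function names are illustrative.

```python
import math


def bits_per_symbol(levels: int) -> float:
    """Bits carried by one PAM symbol with the given number of levels."""
    return math.log2(levels)


def eye_penalty_db(levels: int) -> float:
    """Ideal eye-height penalty versus NRZ, in dB (20*log10 amplitude convention).

    Assumes equal peak-to-peak swing and equally spaced levels, so each of the
    (levels - 1) eyes is 1 / (levels - 1) as tall as the single NRZ eye.
    """
    return 20 * math.log10(levels - 1)


if __name__ == "__main__":
    for name, levels in [("NRZ", 2), ("PAM4", 4), ("PAM8", 8)]:
        print(f"{name}: {bits_per_symbol(levels):.0f} bit(s)/symbol, "
              f"ideal eye penalty {eye_penalty_db(levels):.2f} dB")
```

In this simplified model PAM4 doubles the bits per symbol but gives up roughly 9.5 dB of eye height, which is the gap that stronger FEC has to close. Real links also depend on noise, linearity, and equalization, none of which are modeled here.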

The OCI spec defines exactly this architecture. Group A wavelengths (1308–1315 nm) travel in one direction; Group B (1328–1335 nm) travels the other way on the same fiber. Four DWDM channels per group, each at 53.125 Gbaud NRZ, aggregate to 212.5 Gbps per direction per fiber. BiDi doubles your radix. DWDM maximizes the bandwidth of every link. Together, they’re the right answer from first principles.
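The arithmetic in this paragraph is easy to check. The sketch below takes the channel count and symbol rate from the text; the function names and the 1,000-fiber plant are hypothetical and only there to illustrate the BiDi doubling.

```python
BAUD_GBD = 53.125        # symbol rate per DWDM channel (from the text)
CHANNELS_PER_GROUP = 4   # wavelengths per direction (from the text)
NRZ_BITS_PER_SYMBOL = 1  # NRZ carries one bit per symbol


def per_direction_gbps(channels: int, baud_gbd: float, bits_per_symbol: int) -> float:
    """Aggregate line rate in one direction on one fiber."""
    return channels * baud_gbd * bits_per_symbol


def logical_links(fibers: int, bidi: bool) -> int:
    """Duplex links a fiber plant supports: one fiber per link with BiDi, two without."""
    return fibers if bidi else fibers // 2


if __name__ == "__main__":
    rate = per_direction_gbps(CHANNELS_PER_GROUP, BAUD_GBD, NRZ_BITS_PER_SYMBOL)
    print(f"Per direction, per fiber: {rate} Gbps")
    # Hypothetical plant size, chosen only to show the ratio.
    print(f"Links on 1000 fibers: {logical_links(1000, bidi=False)} duplex-pair, "
          f"{logical_links(1000, bidi=True)} BiDi")
```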

Three generations of Lightmatter’s Passage hardware in silicon today support up to 16-wavelength DWDM bidirectional links from 53.125 Gbaud NRZ to 53.125 Gbaud PAM4 (106.25 Gbps per wavelength).

We already have hundreds of Passage platforms spanning three generations operating on this exact architecture in our validation data center. In 2025, Lightmatter demonstrated 800 Gbps on a single fiber with a 16-wavelength, 53.125 Gbaud NRZ, bidirectional link—the world’s first at that milestone. In another step forward, we also sampled a breakthrough 1,600 Gbps-per-fiber, 16-wavelength, 53.125 Gbaud PAM4 Passage platform that we built in collaboration with Qualcomm.
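The headline figures can be related to the symbol rate with a short calculation. Sixteen wavelengths at 53.125 Gbaud give a raw line rate slightly above the quoted 800 Gbps and 1,600 Gbps. The sketch below assumes the headline numbers count nominal payload of 50 Gbps (NRZ) or 100 Gbps (PAM4) per wavelength, with the remainder being line-coding and FEC overhead. That reading is an assumption, not something the text states.

```python
WAVELENGTHS = 16   # from the text
BAUD_GBD = 53.125  # from the text


def line_rate_gbps(wavelengths: int, baud_gbd: float, bits_per_symbol: int) -> float:
    """Raw line rate per fiber direction, before removing coding overhead."""
    return wavelengths * baud_gbd * bits_per_symbol


def overhead_ratio(line_gbps: float, nominal_gbps: float) -> float:
    """Ratio of raw line rate to the nominal (headline) rate."""
    return line_gbps / nominal_gbps


if __name__ == "__main__":
    nrz = line_rate_gbps(WAVELENGTHS, BAUD_GBD, 1)
    pam4 = line_rate_gbps(WAVELENGTHS, BAUD_GBD, 2)
    # Nominal rates are the headline figures quoted in the text.
    print(f"NRZ : {nrz} Gbps raw vs 800 nominal, ratio {overhead_ratio(nrz, 800)}")
    print(f"PAM4: {pam4} Gbps raw vs 1600 nominal, ratio {overhead_ratio(pam4, 1600)}")
```

Under that assumption both modes carry the same 6.25% of overhead on top of the nominal rate.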

Micro-Rings and the External Laser Source

The OCI spec also specifies the modulation technology: cascaded micro-ring modulators (MRMs) driven by an external laser source (ELS). This is worth unpacking because it reflects a fundamental design philosophy that we’ve long believed is correct for co-packaged optics at scale.

MRMs are extraordinarily compact and power-efficient. A ring resonator is a wavelength-selective filter—it couples light from a bus waveguide into a ring when the ring circumference is resonant with the optical wavelength. By applying a bias to a heater or junction to tune the ring, you switch it on and off resonance, which modulates the light. The device footprint is tiny, the drive voltage is low, and you can pack them densely on a silicon photonic chip.
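The resonance condition behind this is that a whole number of wavelengths fits around the ring: m · λ = n_eff · L, where L is the circumference and n_eff the effective index of the waveguide mode. The sketch below is a first-order illustration only. The 5 µm radius and n_eff of 2.4 are placeholder values, not Lightmatter or OCI parameters, and dispersion is ignored (n_eff is treated as constant).

```python
import math


def resonance_wavelengths_nm(radius_um: float, n_eff: float,
                             lo_nm: float, hi_nm: float) -> list[float]:
    """Wavelengths in [lo_nm, hi_nm] that satisfy m * lambda = n_eff * L."""
    path_nm = n_eff * 2 * math.pi * radius_um * 1e3  # optical path length in nm
    m_min = math.ceil(path_nm / hi_nm)   # smallest mode order inside the window
    m_max = math.floor(path_nm / lo_nm)  # largest mode order inside the window
    # Higher mode order means shorter wavelength, so count down for ascending output.
    return [path_nm / m for m in range(m_max, m_min - 1, -1)]


def tuning_shift_nm(wavelength_nm: float, n_eff: float, delta_n: float) -> float:
    """First-order resonance shift when the effective index changes by delta_n."""
    return wavelength_nm * delta_n / n_eff


if __name__ == "__main__":
    # Placeholder ring: 5 um radius, n_eff = 2.4 (illustrative assumptions).
    for lam in resonance_wavelengths_nm(5.0, 2.4, 1260.0, 1360.0):
        print(f"resonance near {lam:.2f} nm")
    print(f"shift for delta_n = 0.001: {tuning_shift_nm(1322.78, 2.4, 0.001):.3f} nm")
```

With these placeholder values, an index change of one part in a thousand moves the resonance by about half a nanometer, which gives a sense of why thermal drift has to be actively controlled.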

The barrier to entry for MRMs is high. Reaping their power efficiency and area density advantages demands serious engineering effort in stabilization, sequencing, and monitoring. MRMs must be made resilient to rapid thermal transients—800°C/sec and beyond—and require intricate sequencing for multi-wavelength operation, in which unique rings must be assigned to specific wavelengths. Even with all of that working, there’s another layer: firmware validation at scale. Firmware that works on a handful of systems reveals entirely new failure modes at hundreds of systems. At Lightmatter, we’ve built deep expertise in MRM control and sequencing over 8 years and multiple generations of silicon. For new entrants, building a chip with MRMs is the first step on a multi-year journey—one with spectacular upside over every other modulation technology.

Guide 1 is sampling today, features unprecedented wavelength accuracy and stability, and already supports 16 wavelengths at 200- and 400-GHz spacing.
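Channel spacing quoted in GHz can be converted to an approximate wavelength spacing with Δλ ≈ λ² · Δf / c. The sketch below assumes a 1310 nm center wavelength, chosen only because the text places the OCI channels in the O-band around 1300 nm. It is not a statement about Guide's actual wavelength plan.

```python
C_M_PER_S = 299_792_458  # speed of light in vacuum


def spacing_nm(center_nm: float, spacing_ghz: float) -> float:
    """Approximate wavelength spacing for a given frequency spacing."""
    lam_m = center_nm * 1e-9
    return lam_m ** 2 * spacing_ghz * 1e9 / C_M_PER_S * 1e9


def grid_span_nm(center_nm: float, spacing_ghz: float, channels: int) -> float:
    """Approximate span from first to last channel on a uniform grid."""
    return (channels - 1) * spacing_nm(center_nm, spacing_ghz)


if __name__ == "__main__":
    CENTER_NM = 1310.0  # assumed O-band center, for illustration only
    for ghz in (200, 400):
        print(f"{ghz} GHz ~ {spacing_nm(CENTER_NM, ghz):.3f} nm per channel, "
              f"16 channels span ~ {grid_span_nm(CENTER_NM, ghz, 16):.1f} nm")
```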

The catch is that lasers and hot silicon don’t mix. A co-packaged optical engine sits directly on or next to a GPU or switch — one of the hottest components in the data center. Laser reliability drops dramatically with temperature, and laser output power also decreases, reducing wall-plug efficiency. And when a laser does fail — and they do — you need to be able to replace it without pulling the whole compute package. The external laser source solves all of this by physically separating the laser from the heat source. The ELS operates off-chiplet, feeding continuous-wave light into the optical engine via a single-mode fiber. The rings do the modulation. The ELS spec requires narrow linewidth (≤1 MHz), high SMSR (≥30 dB), and tight wavelength accuracy across all eight channels — because the laser’s job is to be a stable, replaceable, thermally isolated light source, nothing more.

Guide is Lightmatter’s ELS based on VLSP technology — a single photonic chip integrating hundreds of lasers, purpose-built for co-packaged optical systems. The industry has long relied on assembling individual laser diodes on submounts with epoxy and wire bonds, including lenses and isolators — discrete, fragile, and expensive to manufacture at scale. Guide 1 changes that: hundreds of lasers on a single chip, no glue joints, far fewer failure points.

Why This Moment Matters

Lightmatter’s portfolio spans the full link architecture roadmap—from 112G and 224G PAM4 links shipping today to the massively parallel DWDM architectures that scale-up fabrics are moving toward.

Industry specifications matter because they coordinate investment across a supply chain. When NVIDIA, Meta, OpenAI, Microsoft, Broadcom, and AMD agree on a common optical physical layer, that signals to every ODM, every fiber vendor, every connector manufacturer, and every optical engine startup that there is a real market to build toward. The OCI MSA spec is that signal.

For Lightmatter, this MSA represents alignment with a strategy we’ve been driving since the company’s founding. The Guide and Passage product lines were designed around the assumption that DWDM, co-packaged optics, and external laser sources would become the standard architecture for AI scale-up. We reasoned from physics, built the hardware, demonstrated the records, and engaged the ecosystem.

Learn more about Lightmatter’s Guide laser platform and Passage interconnect at lightmatter.co. The OCI MSA specification is available at oci-msa.org.

Disruptive Photonics innovator Lightmatter achieves record 1.6 Tbps per fiber to accelerate AI Optical Interconnect

The strategic collaboration combines Qualcomm's high-performance connectivity with Lightmatter's photonic engine to achieve an 8X bandwidth-density advantage

Lightmatter, the leader in photonic (super)computing, today announced a historic milestone in AI interconnect performance: the successful sampling of the Passage™ Co-Packaged Optics (CPO) chiplet, demonstrating a record-breaking 1.6 Tbps throughput per fiber. By leveraging a 16-wavelength DWDM (dense wavelength division multiplexing) architecture at 112G per SerDes lane, this platform provides up to 8X more bandwidth per fiber than existing Near-Packaged Optics (NPO) and CPO solutions.

This integration of Lightmatter's industry-leading Passage photonic engine with Qualcomm Technologies’ advanced 112G PAM4 optical SerDes chiplet creates the first photonic interconnect architecture to reach this unprecedented bandwidth density. The result is a silicon-proven, high-volume ready technology built for hyperscaler deployment that directly addresses the interconnect bottleneck limiting frontier AI model scaling. As generative AI clusters grow, this architecture reduces power consumption over existing interconnect technologies while enabling both scale-up and scale-out connectivity for next-generation XPUs and switches.

Building on the initiative established with Alphawave Semi—now part of Qualcomm Technologies—this collaboration sets a new industry standard for optical bandwidth density and power efficiency, advancing next‑generation AI infrastructure performance. By maximizing throughput per fiber, this architecture sharply increases system throughput while reducing the fiber count required in the data center, streamlining cable management and lowering optical infrastructure costs. The results demonstrate that Passage L‑Series photonic engines are on track to deliver 100 Tbps and beyond for next‑generation XPUs and switches, with industry‑leading power efficiency.
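To give a sense of the fiber-count reduction, the sketch below counts the fibers needed to carry 100 Tbps at 1.6 Tbps per fiber and at a baseline of 200 Gbps per fiber. The baseline is not stated in the release. It is inferred here from the "up to 8X" claim and should be read as an assumption. Redundancy, spares, and laser-feed fibers are ignored.

```python
def fibers_needed(total_gbps: int, per_fiber_gbps: int) -> int:
    """Smallest whole number of fibers that carries total_gbps (ceiling division)."""
    return -(-total_gbps // per_fiber_gbps)


if __name__ == "__main__":
    TARGET_GBPS = 100_000                     # 100 Tbps package target from the text
    DWDM_GBPS = 1_600                         # 1.6 Tbps per fiber from the text
    BASELINE_GBPS = DWDM_GBPS // 8            # assumed baseline implied by "8X"
    print(f"16-wavelength DWDM: {fibers_needed(TARGET_GBPS, DWDM_GBPS)} fibers")
    print(f"Assumed baseline  : {fibers_needed(TARGET_GBPS, BASELINE_GBPS)} fibers")
```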

First Edgeless I/O 3D Co-Packaged Optics

Passage™ L200 eliminates the shoreline bottleneck. By stacking the photonic engine with the electronic IC, we deliver 32-64 Tbps of optical I/O per chip—enabling bandwidth densities 5-10x greater than conventional solutions.

“The successful validation of our 112G/106G PAM4 connectivity technology within the Passage L-Series CPO architecture is a critical step forward for the industry," said Tony Pialis, Executive Vice President and General Manager, Qualcomm Technologies, Inc. "As generative AI models grow in size and complexity, they demand a fundamental shift in data movement. Our strategic work with Lightmatter meets this challenge head-on by providing a clear path to 100 Tbps of total I/O per package. We are focused on delivering the best-in-class power efficiency and throughput required to build the world’s most advanced AI superclusters."

"By combining Qualcomm Technologies’ industry-leading 112Gbps SerDes with our Passage L-Series technology, we have achieved a record-breaking 1.6 Tbps per fiber," said Nick Harris, Ph.D., Founder and CEO of Lightmatter. "This milestone proves that our photonic engines are delivering the massive throughput and power efficiency that hyperscale data centers demand today to overcome the physical limitations of traditional copper and legacy interconnects. We are providing a validated interconnect foundation that moves the industry beyond the levels of existing performance and into high-volume, high-performance CPO for the AI era."

"The industry has reached a point where incremental bandwidth density improvements are insufficient to keep pace with the growth in AI cluster size,” said Vlad Kozlov, Founder and CEO of LightCounting. “By delivering 1.6 Tbps per fiber through a 16-wavelength DWDM architecture, this collaboration between Lightmatter and Qualcomm Technologies represents a significant advancement in addressing fiber cabling and physical space constraints of data center infrastructure. Moving toward a 100 Tbps per-package I/O threshold is a key milestone for the ecosystem in the development of next-generation XPUs and switches."

These record-breaking Passage L-Series photonic interconnects are available through Passage Evaluation Kits for lead customer testing.

Lightmatter will showcase its latest innovations at the Optical Fiber Communication conference in Los Angeles, from March 15-19, 2026, in booth 4817. Qualcomm Technologies' Retimer DSP will be featured at booth 2100 at the event. For more information, please visit https://lightmatter.co/event/ofc-2026/.

About Lightmatter

Lightmatter is leading the revolution in AI data center infrastructure, enabling the next giant leaps in human progress. The company’s groundbreaking Passage™ platform—the world’s first 3D-stacked silicon photonics engine—and Guide® VLSP™—the industry's leading high-bandwidth light engine—connect thousands to millions of processors. Designed to eliminate critical data bottlenecks, Lightmatter’s technology delivers unprecedented bandwidth density and energy efficiency for the most advanced AI and high-performance computing workloads, fundamentally redefining the architecture of next-generation AI infrastructure.

Lightmatter, Passage, Guide and VLSP are trademarks of Lightmatter, Inc. Qualcomm branded products are products of Qualcomm Technologies, Inc. and/or its subsidiaries. Qualcomm is a trademark or registered trademark of Qualcomm Incorporated. Any other trademarks or registered trademarks mentioned in this release are the property of their respective owners.
